Lawmakers and regulators in Washington are starting to puzzle over how to regulate artificial intelligence in health care — and the AI industry thinks there’s a good chance they’ll mess it up.

“It’s an incredibly daunting problem,” said Bob Wachter, the chair of the Department of Medicine at the University of California-San Francisco. “There’s a risk we come in with guns blazing and overregulate.”

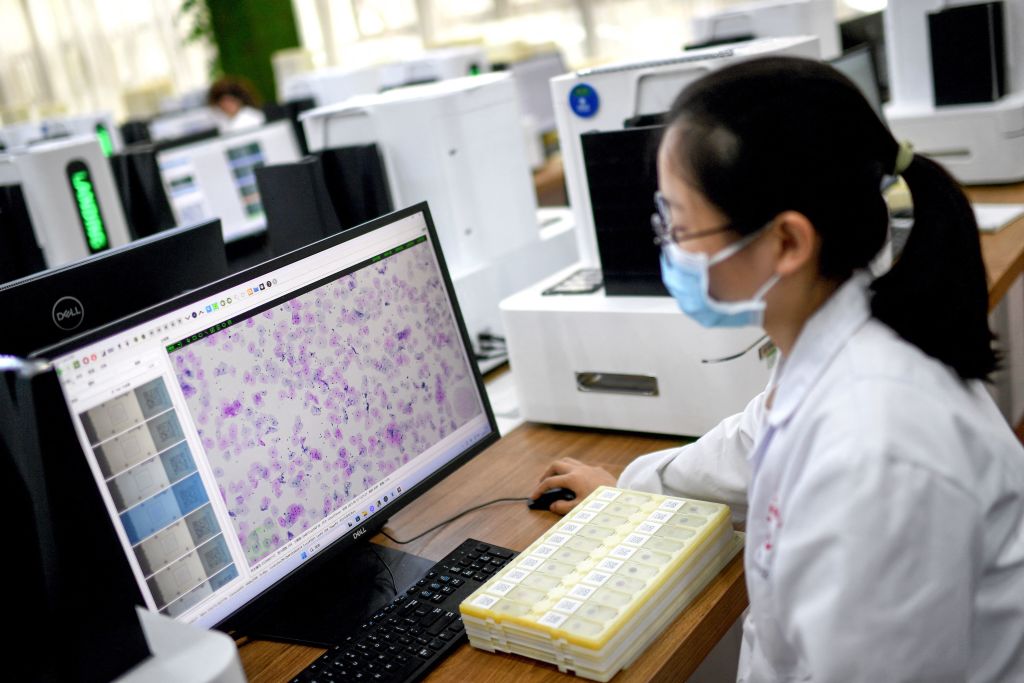

Already, AI’s impact on health care is widespread. The Food and Drug Administration has approved some 692 AI products. Algorithms are helping to schedule patients, determine staffing levels in emergency rooms, and even transcribe and summarize clinical visits to save physicians’ time. They’re starting to help radiologists read MRIs and X-rays. Wachter said he sometimes informally consults a version of GPT-4, a large language model from the company OpenAI, for complex cases.

The scope of AI’s impact — and the potential for future changes — means government is already playing catch-up.

“Policymakers are terribly behind the times,” Michael Yang, senior managing partner at OMERS Ventures, a venture capital firm, said in an email. Yang’s peers have made vast investments in the sector. Rock Health, a venture capital firm, says financiers have put nearly $28 billion into digital health firms specializing in artificial intelligence.

One issue regulators are grappling with, Wachter said, is that AI changes over time, unlike drugs, which will have the same chemistry five years from now as they do today. But governance is forming, with the White House and multiple health-focused agencies developing rules to ensure transparency and privacy. Congress is also showing interest. The Senate Finance Committee held a hearing Feb. 8 on AI in health care.

Along with regulation and legislation comes increased lobbying. CNBC counted a 185% surge in the number of organizations disclosing AI lobbying activities…
